Seamless FPGA Acceleration: How a Video Streaming Company Scaled Real-Time Encoding on Flatcar

Custom Kernel, Maximum Performance: A Case Study in FPGA-Driven Streaming on Flatcar Container Linux

Introduction

In an industry driven by performance and minimal latency, video streaming services face significant engineering challenges, especially when streaming live events or 4K/8K content. High-resolution video encoding in real time demands robust infrastructure, sophisticated software, and specialized hardware. FPGAs (Field-Programmable Gate Arrays) have emerged as an attractive way to offload compute-intensive encoding tasks from general-purpose CPUs. By programming FPGA boards with custom logic, organizations can often achieve greater throughput at lower power consumption than CPU-only or GPU-based solutions for the same fixed-function workload.

This case study focuses on StreamX, a mid-sized video streaming platform known for delivering sporting events, concerts, and major live broadcasts in ultra-high definition. As user demand soared, StreamX discovered that CPU-based encoding was no longer cost-effective or scalable enough. They chose to integrate FPGAs into their server fleet to handle real-time encoding logic. Simultaneously, they adopted Flatcar Container Linux—a minimal, immutable operating system—combined with a custom kernel image to embed the necessary FPGA drivers and modules. This approach allowed them to rapidly scale new nodes while avoiding manual or ad hoc driver installation.

In the following sections, we explore the motivation behind StreamX’s migration, the technical architecture that combines FPGAs, containers, and Flatcar, the challenges encountered, and the results observed in a real-world deployment of thousands of FPGA-accelerated streaming sessions.

Background and Motivation

Why FPGAs for Video Encoding?

Traditionally, high-performance video encoding tasks might lean on general-purpose CPUs or GPUs. However, FPGAs present multiple advantages for specialized workloads like real-time encoding:

Custom Logic: FPGAs can be programmed to perform specialized tasks in parallel, which often yields better performance per watt than GPUs or multi-core CPUs.

Low Latency: FPGAs enable near-hardware-level access to data streams, reducing overhead.

Energy Efficiency: Offloading encoding logic to FPGAs can reduce overall power consumption, critical for data center cost optimization.

For StreamX, these advantages translated into the potential to handle more concurrent streams at higher resolutions, all while keeping operational and power costs manageable.

From Prototype to Production

StreamX’s research team initially tested an FPGA-based encoder prototype on a standard Ubuntu server. While the results were promising from a performance perspective, the environment was cumbersome:

Manual Driver Installs: Installing FPGA board drivers, libraries, and dependencies on each node required considerable hands-on management.

Frequent Updates: Security patches or kernel updates risked breaking the carefully orchestrated driver environment.

Configuration Drift: Over time, no two servers looked exactly the same. A server might have a slightly different driver version, firmware, or library installed.

Keen to avoid these pitfalls at scale, StreamX sought a more immutable, container-centric solution.

Choosing Flatcar Container Linux

What Is Flatcar?

Flatcar Container Linux is a lightweight, immutable OS designed explicitly for hosting containers at scale. Key features include:

Read-Only File System: Minimizes drift by preventing accidental changes to OS partitions.

Atomic Updates: The OS updates itself via an A/B partition scheme—if an update fails, it rolls back automatically.

Minimal Packages: Ships with just enough software to run containers, limiting attack surface and maintenance overhead.

For StreamX, the biggest draw was the concept of immutability and automatic patching. They wanted each node to be as consistent as possible, with no ad hoc installations needed to accommodate FPGA drivers.

Custom Kernel Modules

However, using FPGAs requires kernel drivers and vendor-supplied libraries. On a read-only OS, drivers installed after boot are awkward to manage and may not survive updates or reboots. Instead, StreamX decided to build a custom Flatcar image embedding the required components:

Vendor FPGA Drivers: Supplied as one or more .ko files that load into the Linux kernel.

Firmware Binaries: Any bitstreams or support files that the FPGA boards needed for initialization.

Libraries: Some userland libraries to communicate with the kernel driver (though these could also run in containers).

Technical Architecture Overview

FPGA Encoding Flow

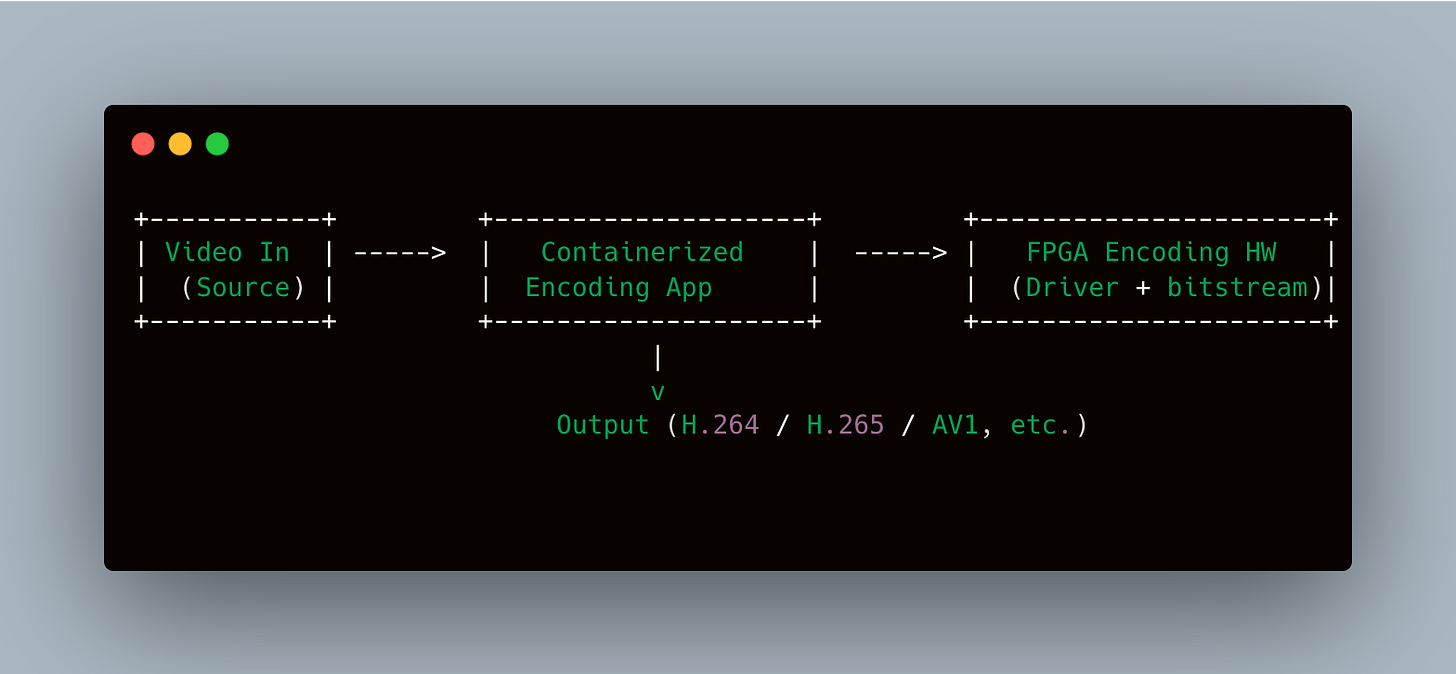

In essence:

Containerized Encoding App: A Docker container that runs a specialized encoding service, linking to the FPGA driver libraries.

Kernel Modules: Integrated at the OS level so the container can request hardware acceleration.

FPGA Board: A PCIe card installed in each server that processes raw video frames and outputs compressed streams.

Custom OS Build Process

Packer or a similar tool was used to create a custom Flatcar image:

Start with flatcar-stable as the base.

Unpack the OS image.

Insert additional kernel modules (.ko files) into a designated read/write partition.

Adjust Ignition or Butane config to load these modules at boot, or build them into /usr/lib/modules if the OS design allows.

Repackage the image and upload to a private repository or AMI (if using AWS).

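Example partial packer.json (ami-xyz stands in for the official Flatcar AMI ID; JSON does not allow inline comments, so the note lives here). The file provisioner uploads the module first, and the shell steps use sudo because the core user is not root:

    {
      "builders": [
        {
          "type": "amazon-ebs",
          "source_ami": "ami-xyz",
          "region": "us-east-1",
          "ssh_username": "core"
        }
      ],
      "provisioners": [
        {
          "type": "file",
          "source": "fpga.ko",
          "destination": "/tmp/fpga.ko"
        },
        {
          "type": "shell",
          "inline": [
            "sudo mkdir -p /opt/fpga-drivers",
            "sudo cp /tmp/fpga.ko /opt/fpga-drivers/",
            "echo 'fpga' | sudo tee /etc/modules-load.d/fpga.conf"
          ]
        }
      ]
    }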

Upon boot, each node automatically loads the fpga.ko module, ensuring the hardware is recognized immediately, removing the need for manual driver installs.
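A node can confirm that the module really did load by inspecting /proc/modules, where each line starts with a module name. The following is a minimal sketch of such a check, not StreamX's actual tooling; the module name fpga comes from the example above, and the helper names are hypothetical.

```python
def loaded_modules(proc_modules_text: str) -> set:
    """Return the module names listed in /proc/modules-style text."""
    names = set()
    for line in proc_modules_text.splitlines():
        fields = line.split()
        if fields:
            # The first whitespace-separated field is the module name.
            names.add(fields[0])
    return names


def is_module_loaded(name: str, proc_modules_text: str) -> bool:
    """Check for a module by name (hypothetical helper).

    The kernel lists module names with underscores, so dashes in the
    requested name are normalised before the lookup.
    """
    return name.replace("-", "_") in loaded_modules(proc_modules_text)
```

On a live node the text would come from reading /proc/modules, for example `is_module_loaded("fpga", open("/proc/modules").read())`, and a boot-time health check could refuse to join the cluster when it returns False.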

Deployment and Scalability

Rolling Out to Data Centers

StreamX’s data centers are spread across multiple regions for redundancy. They used Terraform to specify node definitions. Each node references the custom Flatcar AMI and a user data snippet that configures Docker, GPU/FPGA telemetry, and logging.

Terraform snippet (simplified):

    resource "aws_instance" "fpga_nodes" {
      count         = 10
      ami           = var.custom_flatcar_ami
      instance_type = "c5.xlarge" # placeholder; substitute an FPGA-enabled type, e.g. f1.2xlarge
      user_data     = file("${path.module}/flatcar_ignition.json")
      # ...
    }

When new capacity is required (e.g., major sporting event or a new show release), they can spin up additional nodes referencing the same custom AMI. Each node boots with the same FPGA drivers and OS config, so they are production-ready in minutes.

Kubernetes Integration

While some teams run pure Docker with systemd, StreamX uses Kubernetes extensively. They deploy a device plugin for the FPGA hardware, letting containers request hardware acceleration just as they might request GPUs. The plugin registers with the kubelet on each node, which then advertises an FPGA resource, so workloads with a container spec like:

    resources:
      limits:
        fpga.accelerator.vendorX: 1

can be scheduled on a node with an available FPGA slot. (The resource name here is a placeholder; real device plugins advertise names in vendor-domain/resource form.) This keeps cluster scheduling dynamic while ensuring no single node gets over-committed.
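The no-over-commit property follows from how device-plugin resources are counted: they are whole integers, and a pod is only placed where enough unallocated units remain. The sketch below is a toy model of that accounting, not the Kubernetes scheduler itself; the Node class and schedule function are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    fpga_allocatable: int      # slots advertised by the device plugin
    fpga_allocated: int = 0    # slots already handed to running pods

    def free_slots(self) -> int:
        return self.fpga_allocatable - self.fpga_allocated


def schedule(nodes: List[Node], fpga_request: int) -> Optional[str]:
    """Place one pod on the first node with enough free FPGA slots.

    Returns the chosen node name, or None if the pod must stay pending.
    """
    for node in nodes:
        if node.free_slots() >= fpga_request:
            node.fpga_allocated += fpga_request
            return node.name
    return None
```

With one single-slot node and one dual-slot node, three one-FPGA pods are placed and a fourth stays pending until a slot frees up, which is the behaviour the text describes.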

Challenges and Lessons Learned

Driver/Kernel Mismatch

The engineering team initially struggled with ensuring the FPGA vendor drivers matched the exact kernel version in the stable channel of Flatcar. They had to coordinate with the vendor to get an updated driver set each time a new kernel was rolled out.
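One way to catch such a mismatch before a node ever boots is to compare the driver's vermagic string (as printed by `modinfo -F vermagic fpga.ko`) with the kernel release of the target image (as printed by `uname -r`). The sketch below assumes both strings have already been collected; the function names and the version strings in the usage note are illustrative, and it compares only the release token, not the remaining vermagic flags.

```python
def kernel_from_vermagic(vermagic: str) -> str:
    """Extract the kernel release from a module's vermagic string.

    A vermagic string looks like "6.1.55-flatcar SMP preempt mod_unload";
    the first token is the kernel release the module was built against.
    """
    parts = vermagic.split()
    if not parts:
        raise ValueError("empty vermagic string")
    return parts[0]


def driver_matches_kernel(vermagic: str, running_release: str) -> bool:
    """Return True when the module was built for exactly this kernel release."""
    return kernel_from_vermagic(vermagic) == running_release.strip()
```

For example, `driver_matches_kernel("6.1.55-flatcar SMP mod_unload", "6.1.62-flatcar")` is False, which in a build pipeline would be the signal to request a rebuilt driver from the vendor before publishing the image.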

Atomic Updates

Automatic OS updates are a blessing for security. However, if the FPGA driver is not updated in sync, a node that reboots into the new kernel may fail to load the older driver build. StreamX solved this by controlling the update rollout carefully, verifying the new image in staging before letting update-engine apply it cluster-wide.
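The staging gate can be expressed as a simple rule: promote a candidate OS version only when enough staging nodes have booted it and every one of them loaded the FPGA driver. The following is a sketch of that rule under assumed inputs; the StagingReport fields, the min_nodes threshold, and the version strings are hypothetical and not taken from StreamX's pipeline.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StagingReport:
    node: str
    os_version: str       # OS version the staging node booted
    driver_loaded: bool   # whether the FPGA module loaded on that version


def can_promote(reports: List[StagingReport], candidate: str, min_nodes: int = 2) -> bool:
    """Allow fleet rollout only if enough staging nodes ran the candidate
    version and every one of them loaded the FPGA driver."""
    on_candidate = [r for r in reports if r.os_version == candidate]
    if len(on_candidate) < min_nodes:
        return False  # not enough evidence yet
    return all(r.driver_loaded for r in on_candidate)
```

Requiring a minimum number of reporting nodes avoids promoting on the strength of a single lucky boot; the right threshold depends on the size of the staging pool.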

Firmware Flashes

Some FPGA boards require periodic firmware flashes. While this can be done from the host, the read-only design of Flatcar complicates it. Ultimately, they used ephemeral containers with the vendor’s firmware utility, ensuring the firmware update runs in a controlled, stateless environment.

Monitoring & Telemetry

FPGAs produce hardware-level metrics (e.g., temperature, usage, bit error rates). Gathering these required specialized commands integrated into a container-based monitoring agent. The agent read from /dev/fpga0 or similar device nodes.
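Once the agent has readings in hand, exposing them to Prometheus is mostly a formatting exercise. The sketch below renders gauge values in the Prometheus text exposition format; how the readings are obtained from the device is vendor-specific and left out, and the fpga_ metric prefix, the device label, and the function name are assumptions made for illustration.

```python
from typing import Dict


def render_metrics(device: str, readings: Dict[str, float]) -> str:
    """Render gauge readings in the Prometheus text exposition format.

    `readings` maps a metric suffix (e.g. "temperature_celsius") to its
    current value for the given device node name (e.g. "fpga0").
    """
    lines = []
    for name, value in sorted(readings.items()):
        metric = f"fpga_{name}"
        lines.append(f"# TYPE {metric} gauge")
        # Doubled braces emit the literal { } that wrap the label set.
        lines.append(f'{metric}{{device="{device}"}} {value}')
    return "\n".join(lines) + "\n"
```

Serving this text from an HTTP endpoint inside the monitoring container is enough for a Prometheus scrape job to pick up per-device temperature or utilization series.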

Node Replacement Strategy

If a node experiences hardware failure, they found it faster to replace the node entirely, spinning up a new instance with the custom image, rather than diagnosing the OS or re-installing drivers. Immutability encourages a “cattle, not pets” approach: treat nodes as disposable resources.

Operational Outcomes

Performance Gains

By offloading encoding to FPGAs, StreamX saw:

40% Lower CPU Usage: Freed CPU cores handle other microservices or orchestration tasks.

50-60% Lower Latency: Real-time encoding pipelines finished frames drastically faster, improving live streaming reliability.

Better Power Efficiency: While FPGAs consume power, they are more efficient for specialized encoding than general-purpose CPU clusters performing the same task.

Simplified Scalability

The custom image approach let StreamX add new FPGA nodes with minimal friction. As soon as a node boots, the OS:

Loads the integrated kernel modules

Registers the FPGA device

Pulls relevant container images for the encoding pipeline

Joins the Kubernetes cluster (if configured to do so automatically)

This pipeline means nodes go from zero to production-ready in minutes, with consistent configurations across the fleet.

Production Observability

StreamX extended their existing Grafana + Prometheus stack to track FPGA metrics, container logs, and host kernel logs. They can see at a glance how many channels each node is encoding concurrently, plus the average processing time per frame. Any anomalies in driver logs or kernel messages raise alerts in Slack or PagerDuty.

Case Study Success Highlights

Reduced Manual Driver Installs

By baking drivers into a custom Flatcar image, StreamX avoided repetitive tasks, ensuring every node was identically configured.

Resilient OS Updates

The atomic update mechanism meant that if a kernel + driver mismatch was introduced, a node could roll back easily. This minimized downtime and “breaking changes.”

Modular Container Approach

The actual encoding logic resides in containers. If new features are needed—say support for a new codec—only the container image changes. The base OS + driver remains stable, providing a consistent hardware interface.

Edge Cases (Power Cuts, Kernel Panics)

If a node fails mid-update or experiences a kernel panic, it reboots into the last working partition. In rare instances, the FPGA driver might fail to load, but typically the fallback ensures minimal disruption to overall streaming capacity.
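The fallback behaviour can be pictured with a simplified model of A/B slot selection: each slot carries a priority, a count of remaining boot attempts, and a flag recording whether it has ever booted successfully. This is a toy sketch of that idea rather than Flatcar's actual bootloader logic; the Slot fields and the pick_boot_slot function are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Slot:
    name: str
    priority: int     # higher value is preferred
    tries_left: int   # boot attempts remaining for an unproven slot
    successful: bool  # set once the slot has booted cleanly


def pick_boot_slot(slots: List[Slot]) -> Slot:
    """Pick the highest-priority slot that is proven good or still has tries."""
    bootable = [s for s in slots if s.successful or s.tries_left > 0]
    if not bootable:
        raise RuntimeError("no bootable slot")
    chosen = max(bootable, key=lambda s: s.priority)
    if not chosen.successful:
        chosen.tries_left -= 1  # an unproven slot spends one attempt per boot
    return chosen
```

In this model a freshly updated slot gets one attempt; if the node panics before the slot is marked successful, the next boot skips it and returns to the previous, known-good slot.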

Looking Ahead

As more streaming providers move to 8K or VR/AR content, FPGAs promise a path toward more specialized acceleration. StreamX has begun collaborating with FPGA vendors to integrate next-gen bitstreams for advanced video codecs (AV1, VVC). The success of the Flatcar-based approach encourages them to remain on a container-oriented, immutable path, simplifying environment upgrades and expansions.

They also foresee more dynamic multi-cloud or on-prem/hybrid deployments, where new FPGA nodes can spin up automatically in whichever region sees the highest concurrency. This requires robust orchestration and flexible network setups, but the fundamental building block—Flatcar + custom kernel modules—remains a stable anchor for future growth.

Conclusion

By adopting FPGAs for real-time video encoding, StreamX tackled the immense throughput demands of live streaming and high-resolution VOD content. Coupled with Flatcar Container Linux (in a custom build containing FPGA drivers), they achieved a consistent, automated environment that scales seamlessly. The immutable OS principle drastically cut down on manual driver installs, minimized OS drift, and aligned well with container best practices.

Key Takeaways:

Specialized Hardware: FPGAs can deliver superb performance for targeted compute tasks like encoding, but require careful driver management to avoid friction.

Immutability: A read-only OS with atomic updates significantly reduces the risk of partial or conflicting installations.

Custom Kernel: Baking vendor modules into the OS image ensures every new node is ready for hardware acceleration out of the box.

Containerized Pipelines: Splitting hardware drivers (OS-level) and application logic (containers) fosters rapid iteration. Updating one doesn’t threaten the other.

Scalability: The approach scales both operationally (servers are treated as disposable) and in performance (more concurrent streams at lower CPU overhead).

This synergy of FPGAs and Flatcar points toward an exciting future for specialized workloads. The project’s success story underlines how, with thoughtful design and consistent tooling, next-level performance and high availability can be achieved—even for high-bandwidth, real-time encoding tasks.