Nailing Zero Errors: A Streaming Giant’s Journey with Liveness Probes and Rapid Failover

From Forced Reboots to Flawless Streams: How Better Probes and Failover Saved a Media Platform

Introduction

In the era of always-on content consumption, a few seconds of downtime can translate into frustrated users and lost revenue. Media streaming platforms, in particular, face heightened user expectations around stability—any hiccup in the stream, and subscribers quickly look elsewhere. This case study stems from one of my consulting project for streaming company which follows a major media streaming platform (Lets call it “StreamFlow”) that experienced a significant uptick in user errors—roughly a 10% spike—when one of their nodes was forcibly rebooted during a chaos engineering experiment. StreamFlow’s engineering teams realized that their readiness and liveness probe configurations were incomplete, and that they lacked robust failover procedures when nodes dropped out. By refining these Kubernetes probes and improving failover, they reduced user-facing errors to nearly zero in subsequent forced reboot scenarios, ultimately bolstering user trust and platform resilience.

Company Background

The Subscription Boom

StreamFlow started as a niche video-on-demand service but ballooned in popularity once they secured lucrative distribution rights for various live sports events and original programming. Their infrastructure began modestly—traditional virtual machines hosting monolithic services—but quickly shifted to a microservices-based approach on Kubernetes. This modernization helped them scale during peak events and handle dynamic user loads with more agility.

Challenges

Despite the improvements in elasticity, StreamFlow often struggled to handle transient outages—like node reboots or container restarts. Their environment, while containerized, was not fully optimized for real-time resilience. Users frequently saw buffering messages or error codes (“Video Unavailable,” “Service Temporarily Down”) during even short disruptions. With new streaming competitors appearing monthly, StreamFlow recognized that any user frustration could trigger subscription cancellations.

The Reboot Incident: A 10% Error Spike

Chaos Engineering Experiment

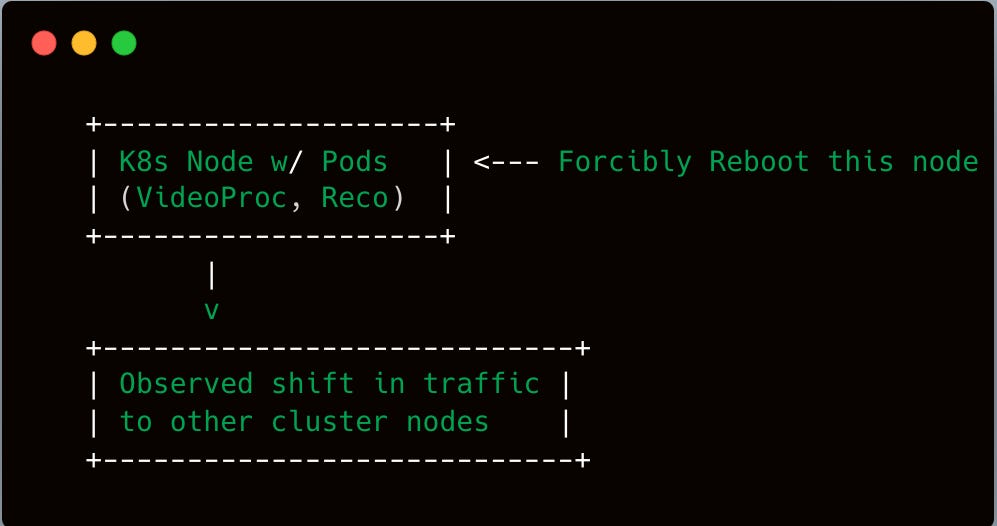

In an effort to build confidence in their platform’s resilience, StreamFlow’s Site Reliability Engineering (SRE) team introduced chaos engineering experiments. One such test involved forcibly rebooting one of their key streaming nodes during a live event simulation. The node hosted multiple microservices: part of the video processing pipeline, user authentication fallback, and a recommendations engine.

Planned Scenario: The SRE team scheduled a forced reboot in the staging environment first. Observations were encouraging—services gracefully shifted to other nodes with minimal error rates.

Production Trial: Confident in those staging results, they decided to do a carefully monitored forced reboot in the production environment under moderate user load.

Impact: 10% User Error Spike

Unexpectedly, during the forced reboot:

10% of active sessions encountered errors. These manifested as “stream not found” or “internal error” messages within 3-4 seconds of the node going offline.

Another subset of sessions saw buffering that lasted up to 10 seconds before the stream resumed.

While 10% may not sound catastrophic, it was substantial enough to cause a flurry of user complaints on social media and an internal executive escalation. For a platform streaming to tens of thousands of concurrent viewers, 10% represented a large cohort of frustrated customers.

Command Output snippet from logs:

[time=2024-07-15T16:42:10Z] Pod restart triggered

[time=2024-07-15T16:42:12Z] 10% error spike detected in the video processing service

[time=2024-07-15T16:42:15Z] Node forcibly rebooted

Root Cause Analysis

Probes Configuration Gaps

Digging into the logs, the SRE team discovered that several microservices running on the forcibly rebooted node had insufficient or misconfigured readiness and liveness probes:

Readiness: Some pods marked themselves “ready” too soon, before they had fully loaded essential libraries or established network connections to the rest of the streaming pipeline.

Liveness: A few microservices used simplistic liveness checks—merely checking if the container’s main process was running, not verifying if essential background threads were healthy.

Due to these incomplete probes, Kubernetes believed certain pods were ready to serve traffic immediately upon scheduling them on other nodes. In reality, these pods were still warming up or waiting for dependencies. This mismatch caused requests to fail or return errors.

Slow Failover Timers

In addition to probes, the SRE team uncovered slow failover timers:

Their load balancer waited up to 5-10 seconds to mark a node as “unhealthy.”

Once a node was offline, traffic rerouting was delayed by these health-check intervals.

Hence, even though the forcibly rebooted node was obviously down, traffic continued heading its way for a short period, leading to dropped connections or incomplete streams.

Service Dependencies

Another factor: the microservices on this node were part of the streaming pipeline’s critical path. If the user authentication fallback service or the real-time video processing module was unreachable, the entire stream session would fail. The team realized they needed more robust fallback logic.

Refining Probes and Failover

Step 1: Enhanced Readiness and Liveness Checks

StreamFlow implemented deeper checks:

Readiness:

Verified the microservice could connect to its upstream or database.

Ensured the application logic had completed initialization (e.g., any caches were populated).

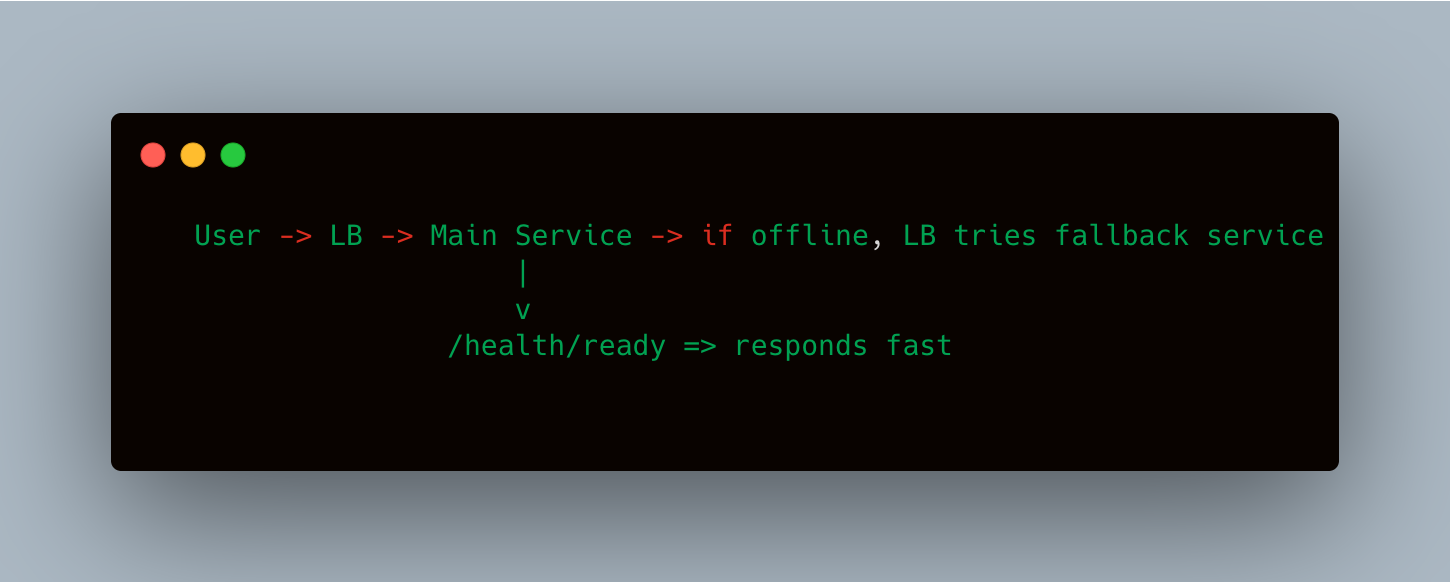

Implemented “health endpoints” returning relevant HTTP codes once the service was truly ready.

Liveness:

Periodically polled a more robust “/health/liveness” endpoint that tested internal threads or queue backlogs.

If the microservice was stuck (e.g., a deadlock in the video processing pipeline), the endpoint returned a failure code, prompting Kubernetes to restart the container.

Code Snippet (Example readiness endpoint in Node.js):

app.get('/health/ready', async (req, res) => {

// Check database connectivity

const dbStatus = await db.ping();

// Check essential in-memory cache

if (!cache.isWarm) {

return res.status(503).json({ status: 'Not ready yet' });

}

// If all checks pass

return res.status(200).json({ status: 'Ready' });

});

Step 2: Faster Node Unhealthy Marking

The platform reduced the load balancer’s grace period from 10 seconds to around 3 seconds in some scenarios. This meant if a node or service didn’t respond promptly, the load balancer flagged it as unhealthy more quickly, and traffic rerouted to healthy instances.

Command Output from a sample AWS ALB or NGINX ingress config:

healthCheck:

intervalSeconds: 3

timeoutSeconds: 2

healthyThresholdCount: 2

unhealthyThresholdCount: 2

Step 3: Additional Fallback Logic

The SRE team introduced a small “fallback service” within the streaming pipeline. If the main pipeline service was offline, the fallback service could provide a simplified stream route or a “we’re reconnecting” message to reduce abrupt user-facing errors. This minimized “hard fails” that kicked viewers out of the session.

Post-Fix Testing and Results

Simulating Node Reboots Again

Armed with refined probes, faster failover, and fallback logic, StreamFlow repeated the forced reboot chaos experiment:

Node Reboot: SRE forcibly shut down the node hosting the same microservices as before.

Traffic Reroute: The load balancer flagged the node as unhealthy within a few seconds, automatically routing new requests to other nodes.

Minimal Errors: Observed user-facing errors dropped from 10% to nearly zero—some users experienced a momentary buffering or a short message indicating the stream was reconnecting, but no permanent failures.

Metrics in Grafana

Grafana dashboards revealed that while the node was offline, no large error spike was observed across the microservices:

Error Rate: 0.2%–0.3% short-lived spike, drastically lower than 10%.

Session Buffering: Went from up to 10 seconds to a typical 1–2 seconds max.

Conclusion: By refining readiness/liveness probes and adopting a more agile failover approach, user impact from node reboots became negligible. The difference was stark: from an entire subset of the user base receiving error codes to just a tiny fraction seeing minimal buffering.

Organizational Impact

User Confidence

While StreamFlow didn’t publicly announce these improvements, internal data showed fewer churn-related tickets referencing “stream downtime.” The technology and customer support teams also reported fewer escalations during planned or unplanned outages.

Cultural Shift to Resilience Testing

Seeing the tangible results of chaos engineering validated SRE efforts. More squads began scheduling chaos experiments—like random container kills or partial network throttling—to ensure that the improved readiness/liveness patterns worked across the entire microservice ecosystem.

Foundation for Future Optimizations

The changes also laid the groundwork for advanced tactics:

Circuit Breakers: If the fallback service or main pipeline is overwhelmed, the system can degrade gracefully.

Horizontal Pod Autoscaling: Probes now accurately reflect when pods are truly saturated or struggling, allowing autoscalers to make better decisions.

Challenges & Lessons Learned

Underestimating the Complexity of Probes

Many devs had assumed an HTTP

200 OKresponse was sufficient. But “OK” might not mean “fully initialized.” Deeper checks ensure a service only marks itself ready once critical dependencies are stable.

Balancing “Fast” vs. “Safe” Failover

Aggressively short health-check intervals can create false positives if a node experiences small network hiccups. StreamFlow tested different intervals to strike a balance: quick detection without flapping states.

Ensuring All Dependencies Are Probed

The shipping microservice example in a different scenario had shown partial readiness can cause hidden slowdowns. Here, the media pipeline had to confirm that the video transcoding library, session DB connections, and caching layers were all functional. Miss one, and you risk partial readiness.

Communicating to Other Teams

The SRE team realized that readiness/liveness improvements only help if each microservice’s development squad embraces them. Training sessions were held to unify how readiness endpoints are coded and tested.

Final Reflections

For StreamFlow, the forcibly rebooted node was more than just a chaos engineering test—it was a wake-up call. The 10% error spike laid bare the hazards of insufficient readiness and liveness probes in a microservices environment reliant on near-instant failover. But within weeks, the team demonstrated how thoughtful enhancements can transform a precarious user experience into a near-seamless event.

Key Takeaways:

Probes Are Powerful

Properly configured readiness/liveness checks ensure that pods serve traffic only when they’re genuinely prepared, minimizing user disruptions when nodes vanish.Chaos Engineering ROI

The forced reboot incident proved invaluable in highlighting hidden flaws. Systemic improvements ripple out to all forms of downtime, whether planned or unexpected.Failover Speed Matters

Users expect streaming continuity. A slow health check or load balancer adjustment can cost valuable user trust. Tightening these intervals (without incurring flapping) is a crucial balancing act.Incremental Gains

Reducing errors from 10% to near zero didn’t happen by magic—it required iterative improvements, retesting, and cross-team alignment. The payoff, however, was immediate: fewer support tickets, greater reliability metrics, and internal confidence that the platform can handle forced reboots with minimal fuss.

As they look ahead, StreamFlow intends to expand their chaos engineering scope—testing not just single-node reboots but also partial cluster outages, network partitioning events, and ephemeral container restarts in the middle of a high-traffic event. With readiness and liveness probes now dialed in, they feel far more prepared to meet those challenges.

Conclusion

This case study highlights how a 10% error spike under a forced reboot scenario uncovered deeper issues in readiness and liveness probe configurations at a major media streaming platform. By systematically refining these probes and speeding up failover processes, the team effectively slashed user-facing error rates to near zero in subsequent chaos tests. The journey underscores that, in a microservices world, minor misconfigurations can balloon into real-world disruptions—but also that rapid iteration, proper chaos testing, and cross-team communication can yield robust, fail-safe streaming experiences for millions of viewers.

Whether your platform is streaming sports events or hosting live concerts, ensuring each node can gracefully handle downtime is fundamental. And by adopting these same probe best practices and failover strategies, you too can minimize user impact whenever hardware or software gremlins inevitably strike.