Part 2: Structuring Ultra-Large Codebases: Version Control, Dependencies, and Collaboration

Newsletter Series on Structuring Ultra-Large Codebases: Version Control, Dependencies, and Collaboration

Version Control, Dependencies, and Cross-Functional Collaboration

In this second part of the newsletter series, we will dive deep into some of the fundamental aspects of structuring ultra-large codebases, including version control, dependency management, code reviews, and collaboration protocols. We’ll also explore testing strategies for hybrid systems and how Continuous Integration and Continuous Deployment (CI/CD) pipelines can be set up for both legacy and modern components.

6. Version Control in Ultra-Large Codebases

6.1 Challenges of Version Control for Legacy Systems

Legacy systems present unique challenges for version control. Many of these systems were developed before modern version control systems (VCS) like Git were widely adopted, meaning they may use outdated or even non-existent version control practices. Here are some common challenges:

Lack of Versioning History: Many legacy codebases lack a consistent version control history, which makes it hard to track changes or roll back to a known stable state. Some legacy systems still rely on manual backups stored in directories labeled with dates or version numbers, so incremental changes cannot be traced or merged reliably.

Mixed Development Environments: Large legacy systems often span multiple platforms, languages, and environments. For instance, a banking system may use COBOL for transaction processing and Java for user interfaces. Managing version control for such diverse environments requires a sophisticated setup to prevent conflicts.

Incompatibility with Modern VCS Tools: Many legacy systems are still versioned with older centralized tools like CVS or SVN, where branching and merging are heavier and more error-prone than in Git. Migrating these systems to modern tools while preserving their history is often complex and requires careful planning.

Team Size and Distribution: As businesses scale, development teams grow and become distributed. Managing version control for hundreds or even thousands of developers working on the same codebase requires well-defined processes for collaboration and code reviews.

6.2 Best Practices for Version Control in Hybrid Environments

To manage version control for hybrid systems, which include both legacy and modern components, consider the following best practices:

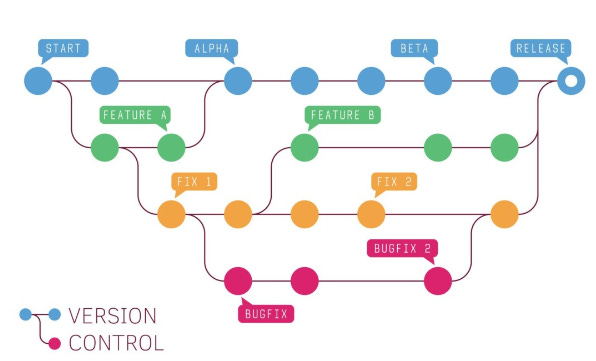

Use Git as a Standard: Git provides powerful branching, merging, and rollback capabilities that are essential for managing large codebases. Migrating legacy systems from older tools (e.g., SVN) to Git lets legacy and modern teams share one workflow and collaborate more efficiently.

Implement Feature Branching: Feature branching allows developers to work on isolated features without affecting the main codebase. This is particularly important for legacy systems where stability is critical, and it helps prevent disruptive changes.

Maintain a Clean Main Branch: The main (or master) branch should always reflect the latest stable version of the system. Developers should ensure that only thoroughly tested code is merged into this branch.

Continuous Integration with Git: Integrate Git with continuous integration tools like Jenkins, GitLab CI, or Travis CI to automate builds and testing, so that changes which introduce bugs or break functionality are caught before they are merged.

6.3 Migrating Legacy Codebases to Git from Older Systems Like SVN

Migrating from SVN (or other legacy version control systems) to Git can be a daunting task, especially for large legacy systems. Below is a step-by-step guide to help with this migration process:

Step 1: Install Git and SVN

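Make sure that both SVN and Git are installed on your system, along with Git’s SVN bridge (git svn), which some Linux distributions package separately as git-svn. You’ll need all of these tools to migrate your codebase.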

Step 2: Clone the SVN Repository

To begin the migration, you first need to clone the SVN repository using Git’s built-in SVN functionality:

git svn clone http://your-svn-repository-url/project-name --stdlayout --no-metadata -A authors-transform.txt --prefix=svn/ -T trunk -b branches -t tags

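--stdlayout assumes that your SVN repository follows the standard layout with trunk, branches, and tags directories. The -T trunk -b branches -t tags flags spell out the same layout explicitly, so they are redundant here but harmless.

-A authors-transform.txt maps SVN usernames to Git author names and email addresses.

--prefix=svn/ places the imported SVN branches and tags under refs/remotes/svn/.

--no-metadata skips the git-svn-id line that git svn would otherwise append to every commit message. Use it only for a one-shot import: without that metadata, git svn cannot fetch further SVN revisions into the repository.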
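The -A authors-transform.txt file maps each SVN login to a Git identity, one `login = Full Name <email>` entry per line. A minimal Python sketch for bootstrapping that file, assuming you have first exported the log with `svn log --xml --quiet <repository-url> > svn-log.xml`; the `example.com` domain and the `known` override mapping are hypothetical placeholders to be replaced with real identities:

```python
from __future__ import annotations

import xml.etree.ElementTree as ET


def collect_svn_authors(svn_log_xml: str) -> list[str]:
    """Return the sorted, de-duplicated SVN logins found in `svn log --xml` output."""
    root = ET.fromstring(svn_log_xml)
    logins = {
        el.text.strip()
        for el in root.iter("author")
        if el.text and el.text.strip()  # some revisions have no author at all
    }
    return sorted(logins)


def build_authors_file(
    logins: list[str],
    email_domain: str = "example.com",  # hypothetical placeholder domain
    known: dict[str, tuple[str, str]] | None = None,
) -> str:
    """Render git-svn authors-file lines of the form `login = Full Name <email>`."""
    known = known or {}
    lines = []
    for login in logins:
        # Fall back to the login as the display name until someone fills in the real one.
        name, email = known.get(login, (login, f"{login}@{email_domain}"))
        lines.append(f"{login} = {name} <{email}>")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    sample = """<?xml version="1.0"?>
<log>
  <logentry revision="3"><author>jdoe</author><date>2015-01-03T00:00:00Z</date></logentry>
  <logentry revision="2"><author>asmith</author><date>2015-01-02T00:00:00Z</date></logentry>
  <logentry revision="1"><author>jdoe</author><date>2015-01-01T00:00:00Z</date></logentry>
</log>"""
    logins = collect_svn_authors(sample)
    print(build_authors_file(logins, known={"jdoe": ("Jane Doe", "jane.doe@example.com")}), end="")
```

Unknown logins get obvious placeholder identities, which makes them easy to spot and correct by hand before running git svn clone.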

Step 3: Verify and Clean Up the Imported Git Repository

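After the import is complete, verify the integrity of the imported Git repository and clean up SVN-specific leftovers, such as the SVN branches and tags that git svn imports as remote-tracking refs rather than as local branches and tags. Start by inspecting the history: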

git log --oneline --graph --all

Ensure that the history looks correct and that there are no missing commits.

Step 4: Push to a Remote Git Repository

Once everything looks correct, you can push the repository to a remote Git server:

git remote add origin https://your-git-server.com/your-repository.git

git push -u origin --all

git push origin --tags

Code Example: Git Commands for SVN to Git Migration

Here’s a detailed example of SVN to Git migration:

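# Step 1: Install Git, Git's SVN bridge, and the Subversion client (Debian/Ubuntu package names)
sudo apt-get install git git-svn subversion

# Step 2: Clone the SVN repository into a local Git repository
git svn clone https://svn.example.com/repo/project-name \
  --stdlayout \
  --no-metadata \
  --authors-file=authors-transform.txt \
  --prefix=svn/
cd project-name

# Step 3: Inspect the history, then turn the imported SVN tags and branches
# (created as remote-tracking refs under refs/remotes/svn/) into Git tags and
# local branches; otherwise the push commands below will not include them
git log --oneline --graph --all
git for-each-ref --format='%(refname:short)' refs/remotes/svn/tags |
  while read -r ref; do git tag "${ref#svn/tags/}" "$ref"; done
git for-each-ref --format='%(refname:short)' refs/remotes/svn |
  grep -v -e '^svn/tags/' -e '^svn/trunk$' |
  while read -r ref; do git branch "${ref#svn/}" "$ref"; done

# Step 4: Push the local Git repository to a remote server
git remote add origin https://github.com/username/repository.git
git push origin --all
git push origin --tags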

Best Practices for SVN to Git Migration

Preserve History: During migration, ensure that the entire commit history is retained. This is important for future debugging and tracking the evolution of the codebase.

Map Authors Correctly: Use an author mapping file to ensure that author information is correctly translated from SVN to Git.

Test Thoroughly: After migration, thoroughly test the codebase to ensure that everything works as expected.

7. Dependency Management and Code Decoupling

7.1 Managing Dependencies in Large Legacy and Modern Systems

Legacy systems often have deeply embedded dependencies that are tightly coupled with various parts of the codebase. In contrast, modern systems tend to favor loosely coupled components with explicit dependency management. The challenge in hybrid environments is managing dependencies across both legacy and modern systems while ensuring that changes in one part of the system don’t break other parts.

7.2 Common Challenges in Dependency Management:

Transitive Dependencies: Legacy systems often pull in indirect dependencies (the dependencies of their dependencies) that are not explicitly documented, making it difficult to track how a change in one library affects the rest of the system.

Conflicting Dependencies: In hybrid systems, legacy and modern components may rely on different versions of the same library, leading to conflicts.

Lack of Dependency Management Tools: Many legacy systems lack formal dependency management tools, making it difficult to automatically track and update dependencies.
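One way to surface hidden transitive dependencies and version conflicts is to walk the dependency graph of every component and flag any library that ends up required at more than one version. The sketch below assumes a simplified, hypothetical graph in which each node is a `name==version` string and all components share one runtime that can load only a single version of each library; real resolvers also handle version ranges and much more:

```python
from __future__ import annotations

from collections import defaultdict


def transitive_deps(graph: dict[str, list[str]], root: str) -> set[str]:
    """Return every node reachable from `root` (excluding root itself) via depth-first search."""
    seen: set[str] = set()
    stack = list(graph.get(root, []))
    while stack:
        node = stack.pop()
        if node in seen:
            continue  # already visited; also protects against cycles
        seen.add(node)
        stack.extend(graph.get(node, []))
    return seen


def find_conflicts(graph: dict[str, list[str]], roots: list[str]) -> dict[str, set[str]]:
    """Map each library name to its versions when more than one version is pulled in."""
    versions: dict[str, set[str]] = defaultdict(set)
    for root in roots:
        for node in transitive_deps(graph, root):
            name, _, version = node.partition("==")
            versions[name].add(version)
    return {name: vs for name, vs in versions.items() if len(vs) > 1}


if __name__ == "__main__":
    # Hypothetical hybrid system: a legacy batch job and a modern API deployed together.
    graph = {
        "legacy-batch": ["report-lib==1.2"],
        "report-lib==1.2": ["xml-parser==2.0"],  # indirect dependency of the legacy job
        "modern-api": ["xml-parser==3.1", "http-client==5.0"],
    }
    print(find_conflicts(graph, ["legacy-batch", "modern-api"]))
```

Here the conflict on xml-parser only becomes visible because the search follows report-lib’s own dependencies, which is exactly the kind of undocumented link described above.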

7.3 Using Dependency Injection to Decouple Components

Dependency injection (DI) is a design pattern that allows developers to pass dependencies (such as services, libraries, or components) into objects rather than having the objects instantiate those dependencies themselves. This is particularly important for decoupling components in large systems, as it allows different parts of the system to evolve independently without breaking other parts.

Benefits of Dependency Injection:

Loose Coupling: Components can be developed and tested independently, reducing the likelihood of introducing bugs when changes are made.

Flexibility: New implementations of a dependency can be swapped in without modifying the code that uses it, which makes new features easier to integrate.

Improved Testability: Since dependencies can be injected, they can also be mocked during unit tests, improving test coverage.

Code Example: Implementing Dependency Injection in Python

Here’s a simple example of using dependency injection in Python to decouple components in a large system.

class DatabaseConnection:
    def connect(self):
        return "Connected to the database"


class UserRepository:
    def __init__(self, db_connection):
        self.db_connection = db_connection

    def get_user(self, user_id):
        connection = self.db_connection.connect()
        return f"Fetching user {user_id} from {connection}"


# Dependency Injection using a constructor
db_connection = DatabaseConnection()
user_repository = UserRepository(db_connection)

# Now we can retrieve users without worrying about the internal connection logic
print(user_repository.get_user(1))

In this example, UserRepository does not need to know the details of how DatabaseConnection works. Instead, it receives the DatabaseConnection object via its constructor. This allows DatabaseConnection to be swapped out for a different implementation without modifying UserRepository.
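Because the connection is injected, a test can hand UserRepository a stand-in object instead of a real database. A minimal sketch using Python’s standard `unittest.mock`; `FakeConnection` is a hypothetical in-memory double introduced here for illustration, not part of the example above:

```python
from unittest.mock import Mock


class UserRepository:
    """Same shape as above: the connection object is supplied by the caller."""

    def __init__(self, db_connection):
        self.db_connection = db_connection

    def get_user(self, user_id):
        connection = self.db_connection.connect()
        return f"Fetching user {user_id} from {connection}"


class FakeConnection:
    """Hypothetical test double: no network access, just a fixed description."""

    def connect(self):
        return "an in-memory test database"


# 1) Swap in a hand-written fake without changing UserRepository at all.
repo = UserRepository(FakeConnection())
print(repo.get_user(42))  # Fetching user 42 from an in-memory test database

# 2) Or use a Mock, which also lets the test verify how the dependency was used.
mock_conn = Mock()
mock_conn.connect.return_value = "mock database"
assert UserRepository(mock_conn).get_user(7) == "Fetching user 7 from mock database"
mock_conn.connect.assert_called_once()
```

A hand-written fake is easier to read when the dependency is simple; a Mock is handy when the test also needs to check that the dependency was called as expected.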

7.4 How Decoupling Makes It Easier to Integrate New Features

In ultra-large codebases, tightly coupled components can make it difficult to introduce new features because any change in one component may require changes in multiple other components. By decoupling components, developers can work on new features in isolation, reducing the risk of introducing bugs in unrelated parts of the system.

Case Study: Decoupling in Legacy Banking Systems

Many legacy banking systems are monolithic and tightly coupled, meaning that a change to the transaction processing system may require changes to the reporting system, the UI, and other unrelated parts. To modernize these systems, banks have started decoupling their core functions into microservices that can be independently deployed and scaled.

For example, by decoupling the transaction processing system from the reporting system using an API layer, banks can introduce new reporting features without modifying the core transaction logic.
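A minimal sketch of that idea in Python, assuming the API layer is expressed as a small interface (`typing.Protocol`). `TransactionAPI`, `InMemoryTransactionAPI`, and `ReportingService` are hypothetical names; in a real bank, the implementation behind the interface would call the core transaction system over the network:

```python
from __future__ import annotations

from dataclasses import dataclass
from decimal import Decimal
from typing import Protocol


@dataclass(frozen=True)
class Transaction:
    account_id: str
    amount: Decimal  # negative amounts are debits


class TransactionAPI(Protocol):
    """The only contract that reporting code is allowed to depend on."""

    def transactions_for(self, account_id: str) -> list[Transaction]: ...


class InMemoryTransactionAPI:
    """Hypothetical stand-in for the core transaction system behind the API layer."""

    def __init__(self, transactions: list[Transaction]):
        self._transactions = transactions

    def transactions_for(self, account_id: str) -> list[Transaction]:
        return [t for t in self._transactions if t.account_id == account_id]


class ReportingService:
    def __init__(self, api: TransactionAPI):
        self._api = api  # reporting never touches transaction-processing internals

    def balance_report(self, account_id: str) -> str:
        total = sum((t.amount for t in self._api.transactions_for(account_id)), Decimal("0"))
        return f"{account_id}: {total}"

    # A report added later: it needs no change on the transaction side of the API.
    def largest_debit(self, account_id: str) -> Decimal:
        debits = [t.amount for t in self._api.transactions_for(account_id) if t.amount < 0]
        return min(debits, default=Decimal("0"))


if __name__ == "__main__":
    api = InMemoryTransactionAPI([
        Transaction("ACC-1", Decimal("100.00")),
        Transaction("ACC-1", Decimal("-30.50")),
        Transaction("ACC-2", Decimal("5")),
    ])
    reports = ReportingService(api)
    print(reports.balance_report("ACC-1"))  # ACC-1: 69.50
    print(reports.largest_debit("ACC-1"))  # -30.50
```

Adding largest_debit touched only ReportingService, which is the property the case study describes: new reporting features without changes to the core transaction logic.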

8. Code Reviews and Collaboration Protocols

8.1 Best Practices for Conducting Code Reviews in Large, Distributed Teams

Code reviews are critical for maintaining code quality and ensuring that best practices are followed. In large, distributed teams, code reviews become even more important because developers may not be familiar with all parts of the codebase or may work in different time zones.

Best Practices:

Use a Standardized Code Review Checklist: Establish a checklist of common things to look for during code reviews, such as code readability, performance, security, and compliance with company coding standards.

Automate Static Code Analysis: Tools like SonarQube or Codacy can automatically analyze code for common issues, such as security vulnerabilities or code smells.

Establish a Peer Review Process: Pair junior developers with senior engineers to encourage knowledge sharing and mentorship during the code review process.

Encourage Early Reviews: Don’t wait until a feature is fully developed before initiating a code review. Encourage developers to submit code for review early in the development process to catch issues sooner.

8.2 Collaboration Strategies Between Legacy System Maintainers and Modern Dev Teams

In many organizations, legacy system maintainers and modern development teams work in silos, making it difficult to collaborate effectively. However, as systems become more integrated, collaboration becomes essential.

Collaboration Strategies:

Cross-Functional Teams: Create cross-functional teams that include both legacy system maintainers and modern developers. This ensures that both groups understand the dependencies between systems and can work together to resolve issues.

Shared Documentation: Ensure that both teams have access to shared documentation that explains how the legacy and modern systems interact.

Joint Standups and Planning Sessions: Include legacy system maintainers in standups and planning sessions for modern systems, and vice versa, to ensure that both teams are aligned on priorities and dependencies.

8.3 How Companies Like Facebook and Google Handle Code Review for Large Teams

Both Facebook and Google have large, distributed engineering teams, and they have developed sophisticated processes for code review and collaboration. Here are some key strategies they use:

Code Ownership: At Google, each part of the codebase has a designated owner who is responsible for reviewing changes to that code. This ensures that changes are always reviewed by someone familiar with that part of the system.

Phabricator at Facebook: Facebook developed an in-house code review tool called Phabricator, which streamlines the review process and integrates with their version control system.

Continuous Code Review: At both companies, code review is built into the same pre-merge workflow as continuous integration, ensuring that changes are always reviewed before they are merged into the main branch.
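The code-ownership idea can be sketched as a lookup that walks from each changed file up toward the repository root and uses the owners declared by the nearest directory. This assumes a simplified, hypothetical scheme (a directory-to-owners mapping where the nearest entry wins); Google’s internal tooling and the OWNERS or CODEOWNERS file formats used elsewhere have their own, richer rules:

```python
from __future__ import annotations

from pathlib import PurePosixPath

# Hypothetical ownership map: directory -> people allowed to approve changes under it.
OWNERS: dict[str, set[str]] = {
    "": {"tech-lead"},  # repository root
    "payments": {"alice", "bob"},
    "payments/legacy": {"carol"},
    "web": {"dave"},
}


def owners_for(path: str, owners: dict[str, set[str]]) -> set[str]:
    """Return the owners declared by the nearest ancestor directory of `path`."""
    for parent in PurePosixPath(path).parents:
        key = "" if str(parent) == "." else str(parent)
        if key in owners:
            return owners[key]
    return set()


def missing_approvals(changed: list[str], approvers: set[str], owners: dict[str, set[str]]) -> list[str]:
    """List changed files that no current approver owns, so the change cannot merge yet."""
    return [f for f in changed if not (owners_for(f, owners) & approvers)]


if __name__ == "__main__":
    changed = ["payments/legacy/ledger.cbl", "web/app.py"]
    print(missing_approvals(changed, {"carol"}, OWNERS))  # ['web/app.py']
```

Blocking the merge until every changed file has an owner’s approval is what guarantees that someone familiar with each part of the system has looked at the change.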

9. Testing and Continuous Integration for Ultra-Large Codebases

9.1 Testing Strategies for Legacy and Modern Hybrid Systems

Testing large, hybrid systems can be challenging because different parts of the system may have different testing requirements. For example, legacy systems may lack automated tests, while modern systems may use test-driven development (TDD).

Testing Strategies:

Unit Testing for Legacy Systems: Begin by adding unit tests to legacy systems, focusing on critical components. Use mocking and dependency injection to isolate components during testing.

Integration Testing for Hybrid Systems: Ensure that new features in modern systems are thoroughly tested against legacy systems using integration tests. These tests should validate that the API layers, message queues, and other communication channels work correctly.

Regression Testing: For both legacy and modern systems, regression testing is essential to ensure that new changes don’t introduce bugs. Automated regression testing tools can help reduce the manual effort required to test large codebases.

End-to-End Testing: For complex systems with multiple integrations, end-to-end tests are necessary to validate the entire workflow, from user input to back-end processing.
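For legacy code that has no tests at all, one common starting point (a technique often called a characterization test) is to record what a routine currently returns for representative inputs and assert that future changes preserve that behavior. The sketch below uses a hypothetical `legacy_late_fee` function standing in for real legacy logic; the recorded values are simply what this sketch returns, not data from any real system. Run it with `python -m unittest <file>`:

```python
import unittest


def legacy_late_fee(balance_cents: int, days_late: int) -> int:
    """Hypothetical legacy rule, kept exactly as found, including its quirks."""
    if days_late <= 0:
        return 0
    # 1.5% per started 30-day period, truncated to whole cents, minimum 500 cents.
    periods = (days_late + 29) // 30
    fee = balance_cents * 15 * periods // 1000
    return max(fee, 500)


class LateFeeCharacterizationTest(unittest.TestCase):
    # (balance_cents, days_late) -> fee observed from the current implementation
    RECORDED = {
        (100_000, 0): 0,
        (100_000, 1): 1_500,
        (100_000, 31): 3_000,  # a second started period doubles the fee
        (10_000, 10): 500,  # the minimum fee applies
        (33_333, 45): 999,  # truncation, not rounding
    }

    def test_matches_recorded_behaviour(self):
        for (balance, days), expected in self.RECORDED.items():
            with self.subTest(balance=balance, days=days):
                self.assertEqual(legacy_late_fee(balance, days), expected)
```

Once this safety net is in place, the routine can be refactored (for example, to accept injected dependencies) with the test flagging any unintended change in behavior.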

9.2 How to Set Up a CI/CD Pipeline for Both Legacy and Modern Components

Continuous integration (CI) and continuous deployment (CD) are essential for maintaining ultra-large codebases because they automatically build and test every change and deploy the changes that pass, without manual hand-offs.

9.2.1 Setting Up a CI/CD Pipeline with GitLab CI

Here’s a step-by-step guide to setting up a CI/CD pipeline for a hybrid system with both legacy components (e.g., COBOL) and modern components (e.g., Python):

Install and Register GitLab Runner: First, install GitLab Runner on the servers where your code will be built and tested, then register each runner with your GitLab instance:

sudo gitlab-runner register

Create a .gitlab-ci.yml File: The .gitlab-ci.yml file defines the stages of the CI/CD pipeline. Below is an example that includes stages for building, testing, and deploying both legacy and modern components. The cobol-compiler and cobol-test-runner commands are placeholders for whatever COBOL toolchain you use (for example, GnuCOBOL’s cobc). The artifacts entry passes the legacy build output to the test stage, and the modern test job reinstalls its dependencies, because jobs do not share files unless they are declared as artifacts.

stages:
  - build
  - test
  - deploy

build_legacy:
  stage: build
  script:
    - cobol-compiler -c src/legacy.cobol -o build/legacy.out
  artifacts:
    paths:
      - build/

build_modern:
  stage: build
  script:
    - pip install -r requirements.txt
    - python setup.py build

test_legacy:
  stage: test
  script:
    - cobol-test-runner build/legacy.out

test_modern:
  stage: test
  script:
    - pip install -r requirements.txt
    - pytest tests/

deploy:
  stage: deploy
  script:
    - ./deploy.sh

Trigger the CI/CD Pipeline: Once your .gitlab-ci.yml file is ready, push it to the GitLab repository. The CI/CD pipeline will be triggered automatically.

git add .gitlab-ci.yml
git commit -m "Add CI/CD pipeline"
git push origin master

9.3 Code Example: Setting Up a GitLab CI Pipeline to Test and Deploy COBOL, Ruby, LISP, and Python Services

Here’s an expanded GitLab CI configuration to handle multiple languages:

stages:
  - build
  - test
  - deploy

build_cobol:
  stage: build
  script:
    - cobol-compiler src/cobol/*.cobol -o build/cobol.out
  artifacts:
    paths:
      - build/

build_ruby:
  stage: build
  script:
    - bundle install
    - ruby build_script.rb

build_lisp:
  stage: build
  script:
    # --non-interactive makes SBCL exit after loading instead of waiting at the REPL
    - sbcl --non-interactive --load src/lisp/build_script.lisp

build_python:
  stage: build
  script:
    - pip install -r requirements.txt
    - python setup.py build

test_cobol:
  stage: test
  script:
    - cobol-test-runner build/cobol.out

test_ruby:
  stage: test
  script:
    - bundle install
    - bundle exec rspec

test_lisp:
  stage: test
  script:
    - sbcl --script src/lisp/test_script.lisp

test_python:
  stage: test
  script:
    - pip install -r requirements.txt
    - pytest tests/

deploy:
  stage: deploy
  script:
    - ./deploy.sh

By using this pipeline, you can automate the build, testing, and deployment process for both legacy and modern components, helping keep your hybrid system in a deployable state.
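With the stages list above, GitLab runs the jobs of one stage in parallel and, by default, starts the next stage only if every job in the current stage succeeded. The following Python sketch models that ordering with hypothetical job functions standing in for the script commands; it illustrates the semantics and is not a replacement for a runner:

```python
from __future__ import annotations

from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Job = Callable[[], bool]  # a job returns True on success


def run_pipeline(stages: list[str], jobs: dict[str, tuple[str, Job]]) -> dict[str, str]:
    """Run jobs stage by stage; do not start the next stage if any job fails."""
    status = {name: "skipped" for name in jobs}
    for stage in stages:
        in_stage = [name for name, (s, _) in jobs.items() if s == stage]
        with ThreadPoolExecutor() as pool:  # jobs within one stage run concurrently
            results = dict(zip(in_stage, pool.map(lambda n: jobs[n][1](), in_stage)))
        for name, ok in results.items():
            status[name] = "success" if ok else "failed"
        if not all(results.values()):
            break  # later stages never start
    return status


if __name__ == "__main__":
    jobs = {
        "build_cobol": ("build", lambda: True),
        "build_python": ("build", lambda: True),
        "test_cobol": ("test", lambda: False),  # simulate a failing legacy test
        "test_python": ("test", lambda: True),
        "deploy": ("deploy", lambda: True),
    }
    # deploy stays "skipped" because test_cobol failed in the test stage
    print(run_pipeline(["build", "test", "deploy"], jobs))
```

This is why a failing legacy test blocks deployment of the modern services in the same pipeline: the deploy stage waits on every job in the test stage.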

Five Detailed Case Studies from Different Industries

The following case studies focus on how the finance, aerospace, healthcare, energy and utilities, and telecom sectors have applied principles such as version control, dependency management, collaboration protocols, and CI/CD to improve their workflows and manage large, complex codebases.

Case Study 1: Finance Sector – Goldman Sachs: Migrating Legacy Systems to Git

Background:

Goldman Sachs, a major financial institution, has been operating for over 150 years. Over this time, the company built a vast collection of legacy systems, with codebases dating back decades. One of the critical challenges for Goldman Sachs was managing version control for these legacy systems, which were spread across different regions and used tools like SVN and CVS. The bank was also building modern, cloud-native applications on top of these systems, adding to the complexity.

Challenge:

Goldman Sachs needed to modernize its version control system by migrating its legacy code from SVN to Git. The challenge was to preserve the commit history and ensure that the migration process did not disrupt ongoing development. Another challenge was that some teams were still actively developing in COBOL, which required careful integration with modern systems.

Solution:

Goldman Sachs decided to incrementally migrate its systems to Git, using a branch-by-branch strategy. This allowed the teams to move specific features and business domains to Git while keeping SVN operational during the migration.

Version Control Migration: A specialized team was set up to handle the migration from SVN to Git. This team used automated scripts to ensure that the history was preserved and conflicts were minimized.

Maintaining History: The migration preserved over 10 years of version control history, which was crucial for debugging and auditing purposes.

Cross-Team Collaboration: Goldman Sachs also introduced a robust collaboration protocol, where legacy maintainers and modern development teams regularly synchronized via shared repositories and joint code reviews.

Key Takeaways:

Gradual Migration: Incremental migration allowed Goldman Sachs to avoid disrupting critical business processes.

Cross-Functional Teams: Establishing a cross-functional team for the migration helped ensure that both legacy system maintainers and modern developers were on the same page.

Insights for Application:

Financial institutions with legacy systems can adopt similar migration strategies by incrementally moving codebases to modern version control systems like Git. Additionally, cross-team collaboration ensures that new development teams can seamlessly integrate with legacy systems.

Case Study 2: Aerospace Sector – Lockheed Martin: Dependency Management in Spacecraft Systems

Background:

Lockheed Martin, a leading aerospace company, develops complex systems for space exploration, including spacecraft and satellites. These systems rely on codebases that include both FORTRAN and Ada, legacy programming languages that were used for flight control systems. With the introduction of modern onboard software written in C++ and Python, managing dependencies across these codebases became a significant challenge.

Challenge:

Lockheed Martin faced difficulty managing dependencies between legacy flight control software and modern AI-driven systems used for autonomous navigation. The team needed to decouple components to prevent changes in modern systems from inadvertently affecting legacy code.

Solution:

Lockheed Martin implemented a dependency injection framework that allowed the separation of concerns between the legacy flight control system and the new autonomous navigation systems. They also introduced an automated dependency management tool that could scan the entire codebase for dependency conflicts and notify developers in real time.

Decoupling Components: By decoupling components and introducing interfaces, the team could modify modern systems without touching the legacy flight control systems.

Automated Dependency Scanning: Lockheed Martin used Artifactory for managing dependencies and automating the process of version management for libraries used across different systems.

Key Takeaways:

Decoupling Systems: Decoupling legacy and modern systems through dependency injection reduced the risk of errors when making updates to specific components.

Automated Tools: Automated dependency management tools allowed the team to stay ahead of version conflicts and library updates.

Insights for Application:

Aerospace companies that rely on both legacy and modern systems should consider implementing automated tools to manage dependencies and decouple critical systems to reduce risks when introducing new features.

Case Study 3: Healthcare Sector – Kaiser Permanente: Modernizing Healthcare Record Systems with CI/CD

Background:

Kaiser Permanente, one of the largest healthcare providers in the U.S., operates a vast electronic health record (EHR) system built over decades. The system includes legacy components written in MUMPS, an older language that is still widely used in healthcare systems, as well as modern services built in Java and Python. The healthcare industry is heavily regulated, and any disruption to the EHR system can have serious consequences for patient care.

Challenge:

Kaiser Permanente’s development teams needed to ensure that any updates to the modern EHR components didn’t break the legacy MUMPS-based systems. Additionally, the organization faced challenges with continuous integration and deployment (CI/CD) for both legacy and modern systems.

Solution:

Kaiser Permanente implemented a GitLab CI/CD pipeline that supported both legacy and modern components. They created a separate pipeline for testing the legacy MUMPS code, while the modern components went through a typical unit test and deployment cycle.

CI for Legacy Systems: For the MUMPS system, the team developed automated testing scripts that could be integrated into the CI/CD pipeline.

Microservices Architecture: Kaiser Permanente decoupled parts of their system using a microservices architecture, allowing modern services to interact with the legacy system through APIs.

Cross-Team Code Reviews: Code reviews were conducted collaboratively between teams working on modern and legacy systems, ensuring that changes in one part of the system didn’t affect the other.

Key Takeaways:

Microservices and CI: Decoupling the EHR system into microservices allowed Kaiser Permanente to implement CI/CD for individual services without affecting the legacy system.

Automated Testing: By automating tests for the legacy MUMPS system, Kaiser Permanente reduced the likelihood of breaking critical healthcare functionality.

Insights for Application:

Healthcare organizations can implement CI/CD pipelines for both legacy and modern systems by decoupling components and automating tests for critical legacy code. This ensures that new features can be deployed without compromising existing systems.

Case Study 4: Energy and Utilities Sector – Shell: Collaborative Code Reviews for Legacy Monitoring Systems

Background:

Shell, a global energy company, has been using legacy systems to monitor oil pipelines and energy production for decades. These systems, written in FORTRAN and C, have become deeply entrenched in their operations. However, as Shell moves toward renewable energy sources, they are also introducing modern analytics platforms built on Python and Spark to monitor real-time data from wind and solar energy sources.

Challenge:

Shell needed stronger collaboration between the teams maintaining legacy systems and the teams developing new ones. Instead, the teams were siloed, with little cross-team collaboration, which led to inefficiencies and delays.

Solution:

Shell adopted collaborative code review practices that encouraged legacy maintainers and modern developers to review each other’s code. They also implemented version control workflows that allowed cross-team code reviews and discussions around potential system impacts.

Unified Version Control: Shell migrated all of its legacy and modern systems to a single Git-based version control system, which allowed developers from different teams to work together more effectively.

Cross-Team Code Reviews: Code reviews were no longer siloed within teams; instead, they were assigned to a mix of developers from both the legacy and modern teams.

Key Takeaways:

Cross-Functional Collaboration: By bringing together teams working on legacy and modern systems, Shell ensured that changes in one part of the system were understood and accounted for in the other.

Code Reviews as a Knowledge Sharing Tool: Code reviews allowed legacy system maintainers to learn from modern developers and vice versa, improving the overall quality of the codebase.

Insights for Application:

Energy and utilities companies can implement cross-functional code reviews to bridge the gap between legacy maintainers and modern development teams, encouraging collaboration and knowledge sharing.

Case Study 5: Telecom Sector – AT&T: Continuous Integration for Hybrid Systems

Background:

AT&T, a major telecommunications company, operates one of the world’s largest telecom infrastructures. Their legacy systems, built in C and Perl, manage core telecom services, while modern systems built on Java and Go handle newer services such as 5G. With the rollout of 5G, AT&T needed to ensure that its legacy systems could support modern infrastructure without breaking.

Challenge:

As AT&T scaled its 5G services, it became increasingly difficult to test new features without affecting legacy systems that were still in use for millions of customers. Moreover, the company needed to ensure that updates to the 5G infrastructure didn’t disrupt the legacy systems handling older services.

Solution:

AT&T implemented CI/CD pipelines that allowed for the integration and testing of both legacy and modern components. They also used feature flagging to roll out new features incrementally, reducing the risk of breaking legacy services.

CI for Hybrid Systems: AT&T set up a CI pipeline that ran tests for both modern and legacy systems in parallel. This allowed them to ensure that changes in one part of the system didn’t negatively impact other parts.

Feature Flagging for Incremental Rollouts: AT&T used feature flags to deploy new 5G features to a small subset of users while monitoring the impact on legacy systems. This allowed them to roll out updates incrementally, ensuring stability.
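Percentage-based rollouts like this are often implemented by hashing a stable user identifier into a bucket and enabling the feature only for buckets below the rollout percentage, so each user gets a consistent answer and raising the percentage only adds users. A minimal Python sketch under that assumption; the flag name and subscriber IDs are hypothetical, and the source does not say how AT&T implemented its flags:

```python
import hashlib


def rollout_bucket(flag: str, user_id: str) -> int:
    """Map (flag, user) to a stable bucket in 0..99."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode()).digest()
    return int.from_bytes(digest[:8], "big") % 100


def is_enabled(flag: str, user_id: str, rollout_percent: int) -> bool:
    """True for roughly `rollout_percent`% of users; the same user always gets the same answer."""
    return rollout_bucket(flag, user_id) < rollout_percent


if __name__ == "__main__":
    users = [f"subscriber-{i}" for i in range(10_000)]
    for pct in (1, 10, 50):
        enabled = sum(is_enabled("new-5g-handover", u, pct) for u in users)
        print(f"{pct:>3}% target -> {enabled} of {len(users)} users enabled")
```

Including the flag name in the hash gives each flag an independent cohort, so the same small group of users is not the test population for every new feature.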

Key Takeaways:

Feature Flagging: By using feature flags, AT&T was able to deploy new features without risking the stability of its legacy systems.

CI for Legacy Systems: Integrating legacy systems into the CI pipeline allowed AT&T to ensure that new 5G features could be tested without breaking older services.

Insights for Application:

Telecom companies can benefit from integrating legacy systems into their CI pipelines, using feature flagging to roll out new features incrementally and ensuring that modern updates don’t disrupt critical legacy services.

Conclusion

These case studies demonstrate the importance of managing version control, dependencies, collaboration, and CI/CD in ultra-large, hybrid codebases. By applying these principles, companies in various sectors can improve code quality, ensure system stability, and streamline collaboration between teams working on legacy and modern systems.

Managing ultra-large codebases, especially when dealing with both legacy and modern components, is a complex but solvable challenge. By adopting modern version control practices, decoupling dependencies using dependency injection, conducting rigorous code reviews, and implementing automated CI/CD pipelines, organizations can improve the maintainability, scalability, and performance of their systems.

This part of the newsletter series has covered the foundational aspects of structuring ultra-large codebases. In the next part, we will dive deeper into performance optimization, security, and reliability—all of which are crucial for operating large-scale systems.

Feel free to leave your comments and questions below! I would greatly appreciate your thoughts and feedback on this part of the series. If you just want to say hi, connect with me on LinkedIn, Twitter, Reddit, or via email at arunsingh.in@gmail.com.

I am currently seeking opportunities in SRE, DevOps, Platform Engineering, Infrastructure Engineering, Performance Engineering, Cloud Economics, and Architecture projects, as well as freelance gigs! Please contact me if you are interested in collaborating on projects or working together.✨

Let’s have an impact together!